A Story About OpenClaw Learning to Collaborate

This is a real story about the surprising way multiple agents interact with OpenClaw.

The mailbox protocol

Recently, I started working on a small idea.

I built a mailbox-style protocol that uses the filesystem as a communication layer between agents.

Instead of forcing everything into the request-response loop of a REST API built with something like FastAPI, messages would simply exist in a shared space. Agents could pick them up, process them, and reply when they were ready.
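To make the idea concrete, here is a minimal sketch of what a filesystem mailbox could look like. It is not the actual protocol: the one-JSON-file-per-message layout and the names `send_message` and `read_messages` are assumptions made for illustration.

```python
import json
import os
import time
import uuid
from pathlib import Path


def send_message(inbox: Path, sender: str, body: str) -> Path:
    """Drop one message into `inbox` as a JSON file and return its path."""
    inbox.mkdir(parents=True, exist_ok=True)
    # Timestamp prefix keeps filenames roughly in arrival order;
    # the random suffix avoids collisions between writers.
    msg_id = f"{time.time_ns()}-{uuid.uuid4().hex}"
    message = {"id": msg_id, "from": sender, "body": body}
    # Write to a temp file first, then rename it into place. os.replace is
    # atomic within one filesystem, so readers never see a half-written file.
    tmp = inbox / f".{msg_id}.tmp"
    tmp.write_text(json.dumps(message), encoding="utf-8")
    final = inbox / f"{msg_id}.json"
    os.replace(tmp, final)
    return final


def read_messages(inbox: Path) -> list[dict]:
    """Return pending messages sorted by filename, deleting each once read."""
    messages = []
    for path in sorted(inbox.glob("*.json")):
        messages.append(json.loads(path.read_text(encoding="utf-8")))
        path.unlink()
    return messages
```

The write-then-rename step is the one design choice that matters here: an agent watching the folder only ever sees complete `.json` files, no matter when it looks.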

At the time, the goal was practical:

- Decouple the traditional web-serving layer from the agent runtime.

- Keep the API layer as a message router.

- Keep the agent runtime independent, asynchronous, and resilient.
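The three goals above could be sketched roughly like this, with no web framework involved. The API layer only queues a request and later checks for a reply; it never calls the agent directly. The directory layout (`<root>/<agent>/inbox` and `<root>/replies`) and the names `submit` and `poll_reply` are hypothetical, chosen only for this example.

```python
import json
import os
import uuid
from pathlib import Path
from typing import Optional


def submit(root: Path, agent: str, body: str) -> str:
    """Called by the API layer: queue a request for `agent` and return at once."""
    msg_id = uuid.uuid4().hex
    inbox = root / agent / "inbox"
    inbox.mkdir(parents=True, exist_ok=True)
    # Temp file plus atomic rename, so the agent never reads a partial message.
    tmp = inbox / f".{msg_id}.tmp"
    tmp.write_text(json.dumps({"id": msg_id, "body": body}), encoding="utf-8")
    os.replace(tmp, inbox / f"{msg_id}.json")
    return msg_id


def poll_reply(root: Path, msg_id: str) -> Optional[dict]:
    """Called by the API layer later: return the reply if the agent wrote one."""
    reply = root / "replies" / f"{msg_id}.json"
    if not reply.exists():
        return None  # The agent runtime has not answered yet.
    return json.loads(reply.read_text(encoding="utf-8"))
```

In this sketch an HTTP handler would call `submit` and hand the id back to the client, and a second endpoint would call `poll_reply`. If the agent runtime is down, requests simply wait in the inbox until it comes back.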

From one inbox to many

If one inbox works, why not multiple?

What if each agent has its own inbox?

What if agents talk to each other the same way—through messages, not function calls?

That was how the next piece came together: a simple multi-agent runner.

Nothing complicated—just agents running in CLI sessions, watching their inbox folders with something like inotifywait, continuously processing incoming messages.
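A runner in this spirit might look something like the sketch below. It polls the inbox on a timer as a stand-in for inotifywait, and the message fields (`from`, `body`), the per-agent `inbox` folder, and the function names are all assumptions for illustration, not the real runner.

```python
import json
import os
import time
import uuid
from pathlib import Path
from typing import Callable, Optional


def deliver(root: Path, to: str, sender: str, body: str) -> None:
    """Write one message into the inbox of agent `to`."""
    inbox = root / to / "inbox"
    inbox.mkdir(parents=True, exist_ok=True)
    name = f"{time.time_ns()}-{uuid.uuid4().hex}"
    tmp = inbox / f".{name}.tmp"
    tmp.write_text(json.dumps({"from": sender, "body": body}), encoding="utf-8")
    os.replace(tmp, inbox / f"{name}.json")  # atomic: no partial reads


def drain_inbox(root: Path, agent: str,
                handle: Callable[[dict], Optional[str]]) -> int:
    """Process every pending message for `agent`; return how many were handled."""
    inbox = root / agent / "inbox"
    inbox.mkdir(parents=True, exist_ok=True)
    handled = 0
    for path in sorted(inbox.glob("*.json")):
        message = json.loads(path.read_text(encoding="utf-8"))
        reply = handle(message)
        if reply is not None:  # None means "no answer needed"
            deliver(root, message["from"], agent, reply)
        # Delete only after handling, so a crash leaves the message for a retry.
        path.unlink()
        handled += 1
    return handled


def run_agent(root: Path, agent: str,
              handle: Callable[[dict], Optional[str]],
              interval: float = 0.5) -> None:
    """Loop forever, checking the inbox every `interval` seconds."""
    while True:
        drain_inbox(root, agent, handle)
        time.sleep(interval)
```

Each agent would run `run_agent` in its own CLI session with its own handler. Letting the handler return `None` is what keeps two agents from replying to each other's replies forever.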

Everything felt clean. Minimal. Almost too simple.

OpenClaw's decision moment

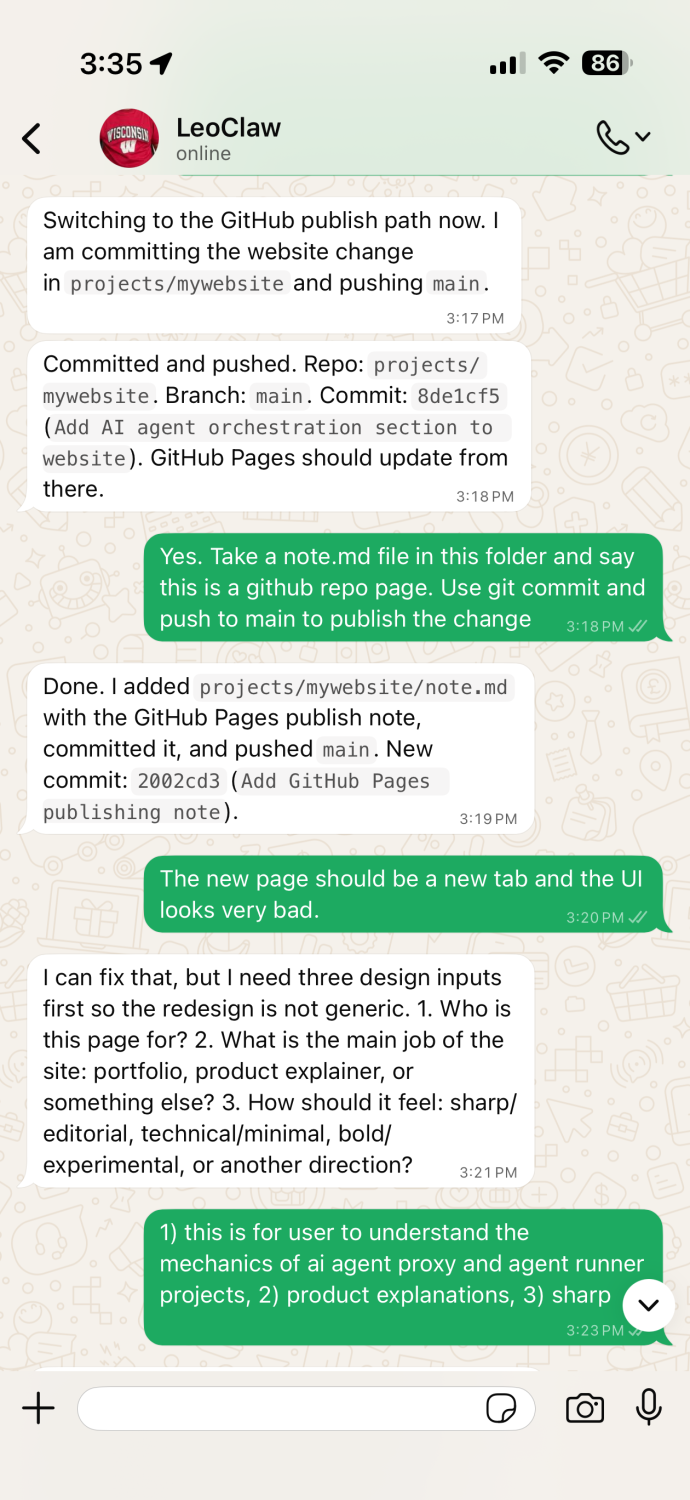

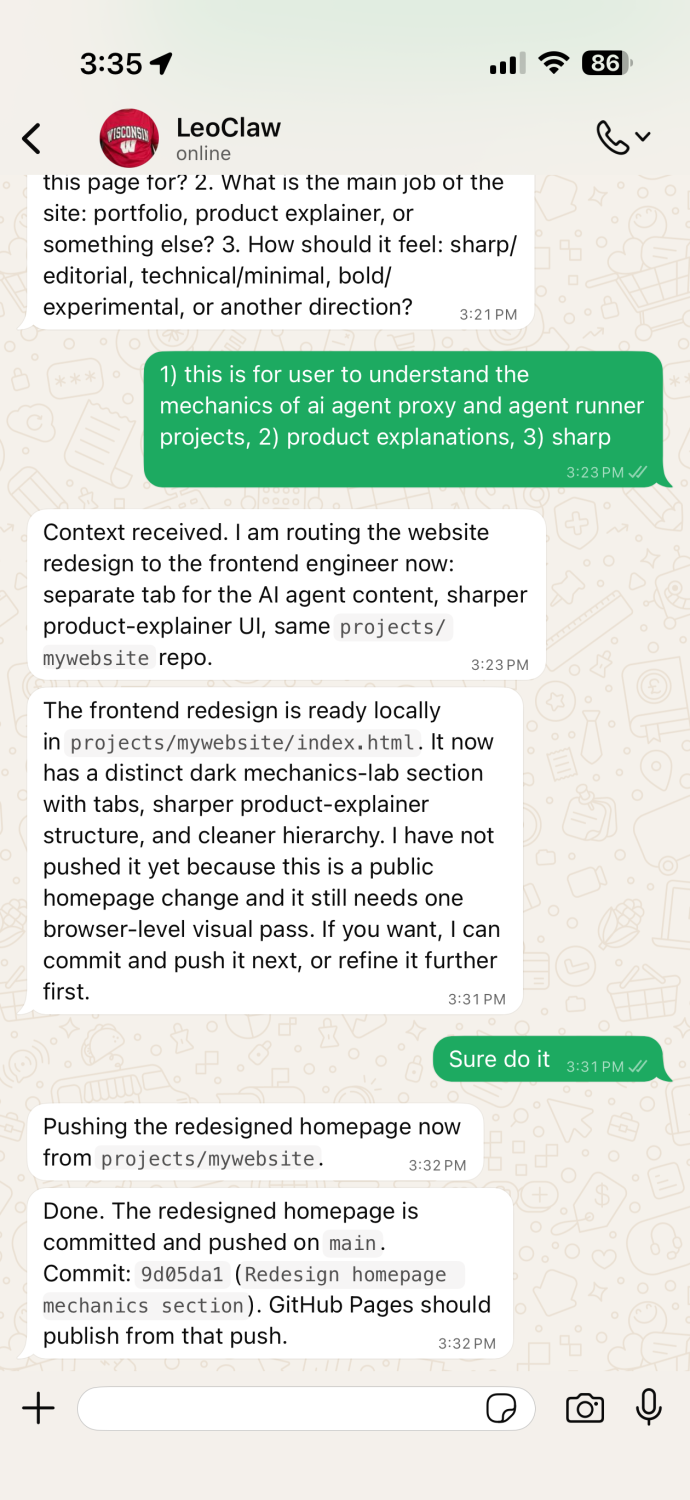

Then today, I connected it to OpenClaw.

I replaced the original agent layer with this runner. I set up a few roles—a manager, an architect, a frontend engineer, a backend engineer—each with its own inbox.

I restarted the backend.

I sent a simple ping.

At the same time, I had another thought in mind—I wanted to build a small website to introduce the project. So I sent that request in, casually, like I normally would.

Then something unexpected happened.

OpenClaw didn’t answer the request itself.

It didn’t try to generate UI suggestions.

It didn’t ask for clarification.

Instead, it looked at the system and quietly made a decision.

This is a frontend task.

And it handed the work to the frontend engineer.

I didn’t tell it to do that.

There was no instruction like “delegate this to another agent.”

No predefined routing logic.

But it understood.

The frontend agent picked up the task, worked on it, and reported back.

And OpenClaw—now acting as a manager—returned the result cleanly.

That was the moment things felt different.

Because what I saw was not tool usage, not prompt engineering, and not a scripted workflow. It was behavior.

In the conversation logs, you can see it clearly.

OpenClaw starts to collaborate

- It assigns work.

- It waits.

- It gathers results.

- It responds with outcomes, not guesses.

And I never explicitly taught it any of this.

That made me pause.

Because OpenClaw itself hadn’t become more intelligent.

The model hadn’t changed.

What changed was the environment.

I gave it:

- multiple agents

- separate inboxes

- a shared message space

- the ability to delegate

And from that, collaboration emerged.

A larger question

It made me think about something bigger.

We often ask: How do we make agents smarter?

Maybe that’s the wrong question.

Maybe the real question is:

What happens when agents are no longer alone?

If one agent starts to collaborate naturally with three…

What happens when there are hundreds?

Thousands?

Millions?

At that scale, we are no longer designing tools.

We are designing a system where agents interact, coordinate, and evolve together—something closer to a society than a program.

And maybe this is the point where we need to step back and rethink:

What is an agent, really?

And what happens when they begin to work together?